Two Powerful Earthquakes Did Not Hit Northern California, Automated Quake Alerts Fail USGS, LA Times After Deep Japan Quake

SAN FRANCISCO (CBS SF) -- Two powerful earthquakes, measuring magnitude 4.8 and 5.5, did not hit San Simeon and Brooktrails early Saturday morning, despite widespread reports that they had. Those reports were the result of a glitch in the systems used to quickly spread the word about damaging tremors.

Automated earthquake sensors, and the text-message, email and Twitter alerts tied to them, again appeared to malfunction for the United States Geological Survey (USGS) and for the media outlets that have built automated systems to relay those alerts to readers.

A real quake did hit: a massive magnitude 7.8 quake struck the open ocean hundreds of miles south of Tokyo at 4:23 a.m. Pacific time, more than 400 miles below the surface, sending shockwaves of seismic energy around the globe.

About 15 minutes later, the USGS sent out two alerts: one about a quake near Brooktrails, California, later revised to magnitude 5.5, and another about an earthquake near San Simeon.

The alerts also triggered the LA Times "Quakebot" algorithm to write two stories, which were then read by a human editor and published.

The problem is that these reported events never happened, and the alert for the actual quake off Japan went out about five minutes after the first two false alerts.

A USGS geophysicist explained that the Japan quake was so deep and strong that it sent ripples of energy around the globe, and the Northern California Seismic Network picked up the spikes from those initial waves and falsely reported them as additional quakes. The problem is likely to recur whenever a strong, deep quake occurs.
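The failure the geophysicist describes suggests a simple guard a downstream news system could apply. Below is a minimal Python sketch, not a USGS or LA Times method: it flags any local alert that arrives shortly after a large quake elsewhere so an editor checks it before publication. The `Quake` type, the `is_suspect` function and its thresholds (`min_distant_magnitude=6.5`, `window_minutes=60`) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Quake:
    place: str
    magnitude: float
    time: datetime  # all times share one clock (Pacific time here)


def is_suspect(local_alert: Quake,
               recent_quakes: list[Quake],
               min_distant_magnitude: float = 6.5,
               window_minutes: int = 60) -> bool:
    """Hypothetical guard: flag a local alert that lands within a time window
    after a large quake elsewhere, since that quake's waves may have been
    misread as a new local event."""
    window = timedelta(minutes=window_minutes)
    for big in recent_quakes:
        if big.magnitude < min_distant_magnitude:
            continue
        elapsed = local_alert.time - big.time
        # Only alerts after the big quake, and inside the window, are flagged.
        if timedelta(0) <= elapsed <= window:
            return True
    return False


# Times of day follow Saturday's events; the calendar date is illustrative.
japan = Quake("off Japan", 7.8, datetime(2015, 5, 30, 4, 23))
brooktrails = Quake("near Brooktrails, California", 5.5, datetime(2015, 5, 30, 4, 38))
later = Quake("near Brooktrails, California", 5.5, datetime(2015, 5, 30, 9, 0))

print(is_suspect(brooktrails, [japan]))  # True: 15 minutes after the Japan quake
print(is_suspect(later, [japan]))        # False: well outside the window
```

A genuine local quake inside the window would also be flagged, which is why a sketch like this should route alerts to a human for review rather than discard them.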

A real magnitude 5.5 quake would have been expected to cause significant local damage, but instead the rural area north of Healdsburg slept calmly through the automated earthquake reports.

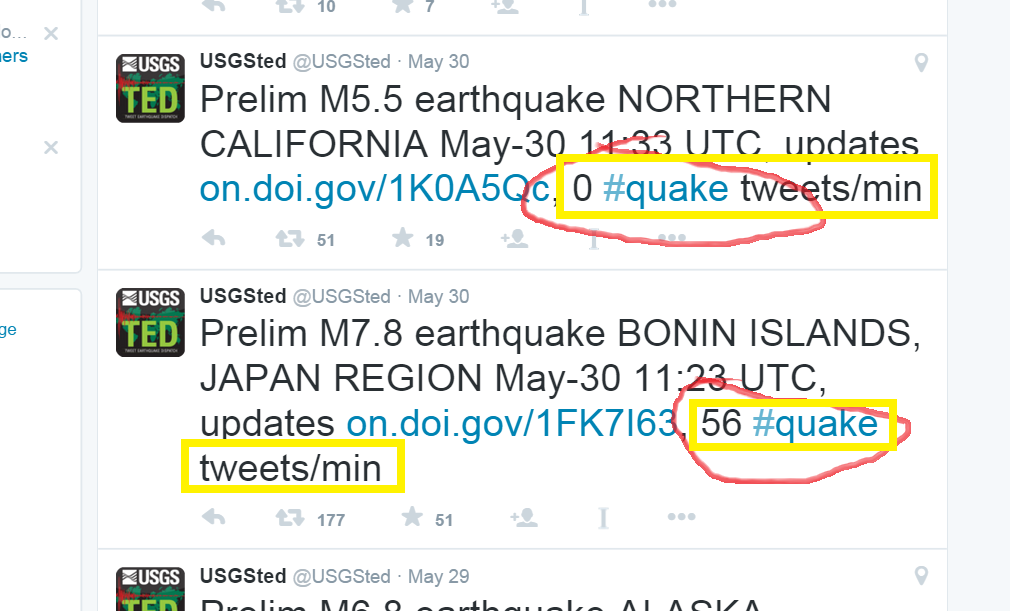

Both @USGSted tweets about the non-quakes indicated that there were zero tweets per minute using the hashtag #quake. With quakes of that size near populated areas, this "#quake" count is one way to tell whether an automated USGS tweet is accurate.

For automated services like the LA Times' "Quakebot," checking how many real people tweeted with the #quake hashtag before the alert could help reduce erroneous reports.

The actual quake off Japan had 56 "#quake" hashtag usages per minute by the time the alert went out.
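The hashtag check described above can be sketched in a few lines. This is a minimal Python illustration under stated assumptions: the `Alert` type, the `needs_corroboration` function and its thresholds (`felt_magnitude=4.5`, `min_tweets_per_minute=1`) are hypothetical, not part of Quakebot or any USGS service. The tweet rates are the ones reported with Saturday's alerts.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    place: str
    magnitude: float
    quake_tweets_per_minute: int  # #quake rate reported alongside the alert


def needs_corroboration(alert: Alert,
                        felt_magnitude: float = 4.5,
                        min_tweets_per_minute: int = 1) -> bool:
    """Hypothetical rule: return True when a quake strong enough to be felt
    drew (almost) no #quake tweets, so an editor should confirm it first."""
    if alert.magnitude < felt_magnitude:
        # Below this size the tweet check is not applied: small quakes
        # may go unfelt, so silence on Twitter proves little.
        return False
    return alert.quake_tweets_per_minute < min_tweets_per_minute


alerts = [
    Alert("near Brooktrails, California", 5.5, 0),
    Alert("near San Simeon, California", 4.8, 0),
    Alert("off Japan", 7.8, 56),
]
for a in alerts:
    print(a.place, "-> hold" if needs_corroboration(a) else "-> ok")
```

Under these assumed thresholds both phantom California quakes are held while the Japan quake passes. A real quake in a remote or offshore area could also draw few tweets, so the rule should hold an alert for review, not suppress it.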

After a major quake, tweets often beat all the automated services in reporting it quickly, and are sometimes more accurate.

When the 6.0 Napa earthquake hit on August 24th last year, the first online report of the quake came not from a government sensor or a media outlet with software to re-tweet or re-post information, but from a guy who grabbed his smartphone and quickly tapped out six characters at 3:21 a.m., while the earthquake was still rolling. This beat the USGS automated alerts by minutes.

The two Saturday quakes were removed from the large interactive quake map on the front page of USGS.gov that morning; however, event pages for the quakes still existed well into the day, and it took several more hours for a "deletion notice" to be sent out. Stories about the two non-quakes remained on the LA Times website, and on several media sites that pull data or RSS feeds from the USGS or the LA Times, well into Saturday.

The official automated USGS tweets, along with tweets from @latimes, other media outlets and retweet services, continue to ricochet around social media and have been retweeted dozens of times, because there is no way to edit a tweet once it is sent; the only option is to delete it. Headlines attached to a tweet do update automatically, however, as seen in the LA Times tweets.

The same chain of events occurred Friday, when the USGS, the Associated Press and the LA Times reported that a magnitude 5.1 earthquake had hit the Redding area after a real and very powerful earthquake struck off the coast of Alaska.

The Associated Press confirmed Friday morning that the report was an "erroneous alert based off an earthquake that struck in Alaska" and killed the story from its wire service.

As with Saturday's false reports, the event record of Friday's phantom Redding quake vanished from the USGS seismic activity map but remained on Twitter, and the LA Times story stayed up for hours before being updated.

"Quakebot is programmed to extract the relevant data from the USGS report and plug it into a pre-written template. The story goes into the LAT's content management system, where it awaits review and publication by a human editor," Slate reported in a 2014 article about the newspaper's use of the computerized journalism technology.
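To make the Slate description concrete, here is a minimal Python sketch of the template approach. It is not Quakebot's actual code: the template wording, the `draft_story` function and the `"awaiting_editor_review"` status are hypothetical, and the input is a hand-written dictionary shaped like an event in the USGS GeoJSON feed (`properties.mag`, `properties.place`), not a real USGS record.

```python
# Invented story template; {mag} and {place} are filled from the USGS event.
TEMPLATE = (
    "A magnitude {mag:.1f} earthquake was reported {place}, "
    "according to the U.S. Geological Survey."
)


def draft_story(event: dict) -> dict:
    """Fill the template from one USGS-style event and return an
    unpublished draft for the content management system."""
    props = event["properties"]
    mag, place = float(props["mag"]), props["place"]
    return {
        "headline": f"Magnitude {mag:.1f} earthquake reported {place}",
        "body": TEMPLATE.format(mag=mag, place=place),
        # Drafts wait for a human editor; nothing is published automatically.
        "status": "awaiting_editor_review",
    }


# Hand-written sample event, not a real USGS record.
sample = {"properties": {"mag": 5.5, "place": "near Brooktrails, California"}}
story = draft_story(sample)
print(story["headline"])  # Magnitude 5.5 earthquake reported near Brooktrails, California
```

Because the template trusts whatever the feed says, a false event yields a fluent, plausible draft; the human review step is the only check, and that is where Friday's and Saturday's phantom quakes slipped through.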

The Los Angeles Times confirmed to CBS SF that a human editor continues to review all Quakebot stories. In Friday's case, a "deletion notice" was not sent out through the automated government systems.

A Times spokesperson told CBS SF that the newspaper's editors are working with the USGS to coordinate the two automated systems and ensure the data is accurate. Those conversations may have begun Friday, but the Japan quake tested the systems again the next day, and the USGS false-report problem struck a second and third time.

The article, with a headline and excerpt describing Friday's non-quake near Redding, remains on the LATimes.com website, but a paragraph was added reading: "10:57 a.m.: This post is incorrect, with the USGS reporting that sensors in California misidentified seismic activity from a magnitude 6.7 quake that struck earlier in Alaska. The USGS reported there was no earthquake in the area at the time reported." Later Friday, the headline was updated as well.

As of 7:00 a.m. Saturday, LATimes.com still reported the San Simeon and Brooktrails non-quakes as actual quakes.

Journalist and programmer Ken Schwencke designed "Quakebot" but left the Times in 2014. The Columbia Journalism Review has described the system in depth.

Automated data feeds are increasingly common, and news outlets including the Associated Press are using them to automatically write and share everything from stock updates to NCAA sports stories.

The challenge for journalists is knowing when to post a story based on automated data immediately and when to wait and corroborate it with actual reports. With earthquakes, Twitter is often the best judge of whether a quake really happened.